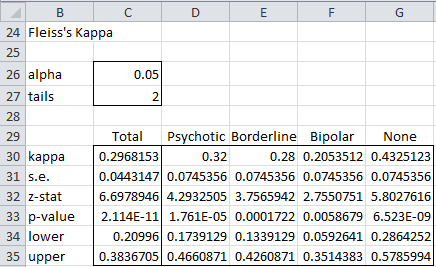

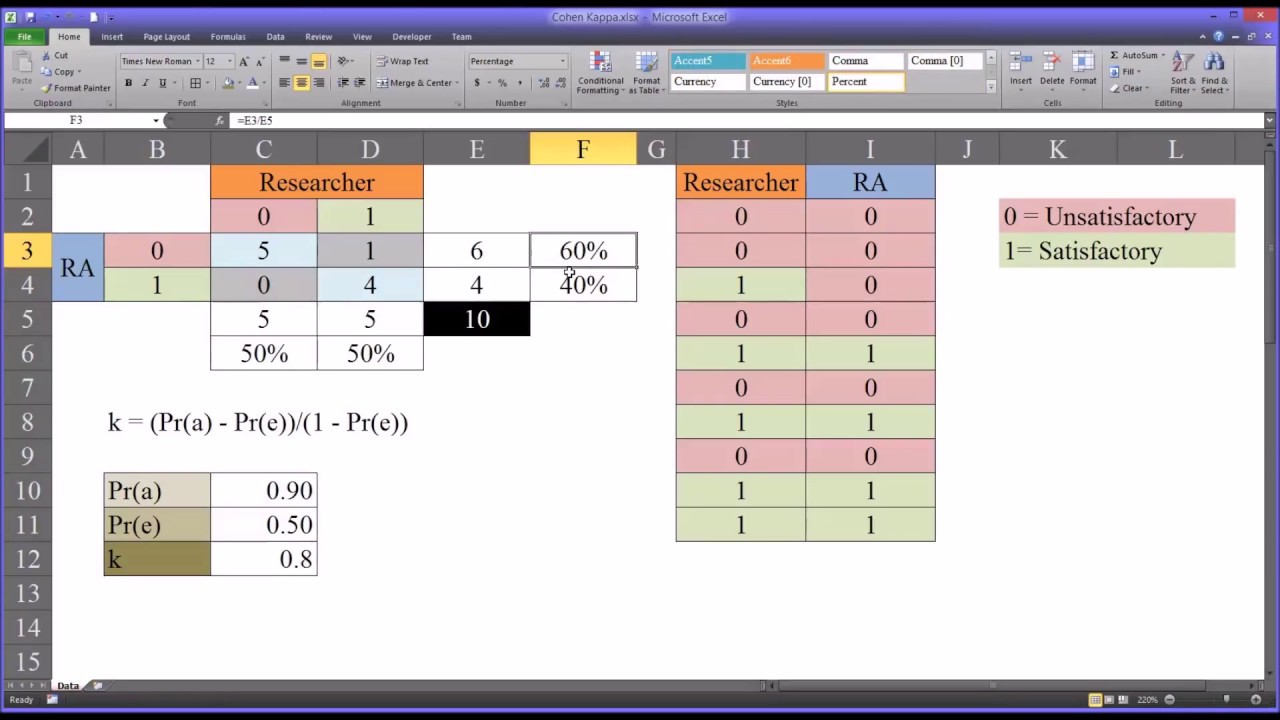

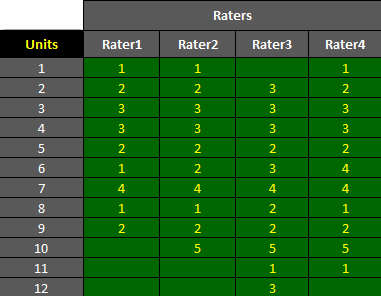

AgreeStat/360: computing weighted agreement coefficients (Conger's kappa, Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) for 3 raters or more

Fleiss' Kappa for the agreement. Each bar represents the agreement on...

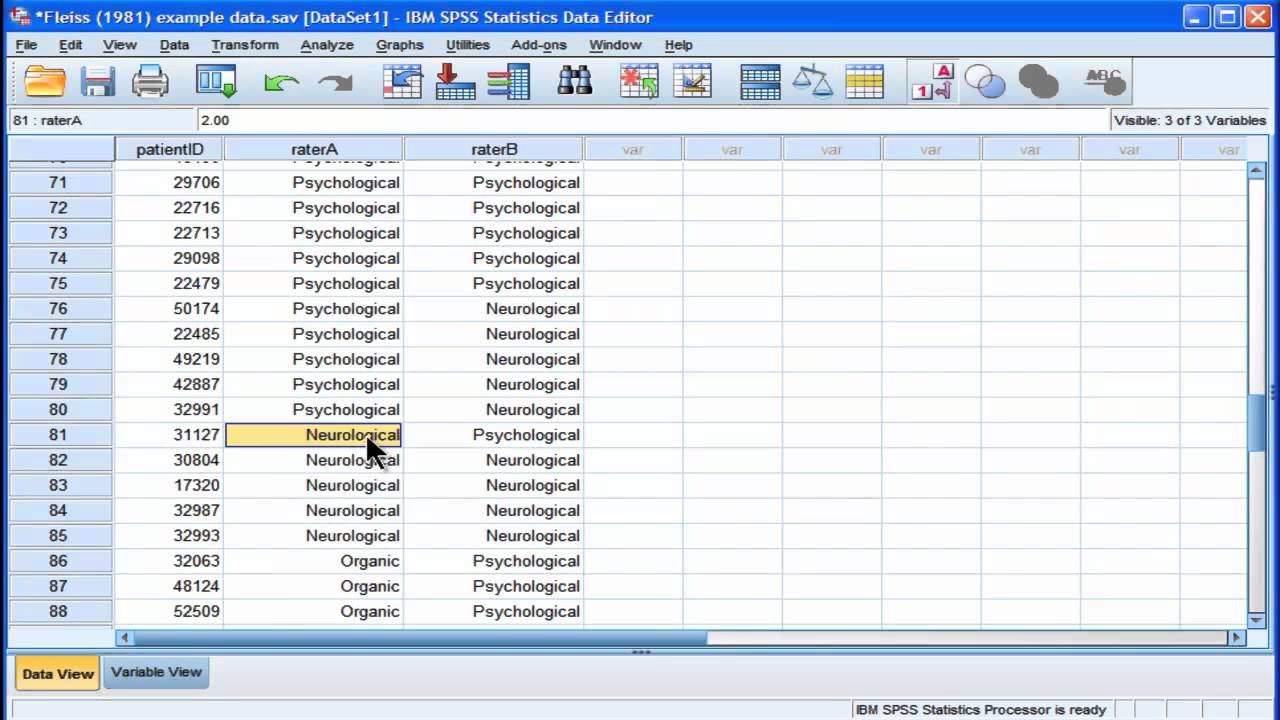

The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

![Interpretation of Fleiss' kappa (k) from Landis and Koch [9].](https://www.researchgate.net/profile/Martine-Etoga-2/publication/344272481/figure/tbl1/AS:945910032908288@1602533934639/Interpretation-of-Fleiss-kappa-k-from-Landis-and-Koch-9_Q320.jpg)